DeepMind's JumpReLU SAE peers inside the LLM black box

DeepMind's new sparse autoencoder technique helps better understand and control the behavior of LLMs.

One of the big challenges of large language models (LLMs), and of deep neural networks in general, is understanding how they make decisions, often referred to as the “black box” problem. It is very difficult to map the activations produced by an LLM's billions or trillions of parameters to specific concepts or behaviors, which in turn makes it difficult to have granular control over the outputs.

One popular technique for investigating the behavior of LLMs is the sparse autoencoder (SAE). SAEs have recently become a hot topic as major AI labs use them to explore the inner workings of LLMs, and DeepMind's JumpReLU SAE is the latest addition to this family of methods.

SAE is a variant of the autoencoder architecture. Autoencoders map a set of input features to an intermediate representation and then try to reconstruct the original features. This mechanism helps them learn latent representations that can be used for different tasks, such as compression, style transfer, and denoising.

SAEs add a constraint to the vanilla autoencoder: they force the model to activate only a small number of the intermediate features for each input. Sparsity makes the learned features easier to interpret, because each input is explained by a handful of active features. SAEs must find the right balance between sparsity and reconstruction fidelity. Too much sparsity will prevent the SAE from learning to reconstruct the original features. Too little sparsity, on the other hand, will make the learned features difficult to interpret.
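The tradeoff between sparsity and reconstruction fidelity can be sketched in a few lines of NumPy. This is a minimal illustration, not the method from any specific paper: the names `sae_forward`, `sae_loss`, and `sparsity_coeff`, the layer sizes, and the random inputs are all assumptions, and the L1 penalty is just one common way to encourage sparsity.

```python
import numpy as np

def sae_forward(x, W_enc, b_enc, W_dec, b_dec):
    """Encode inputs into non-negative features, then reconstruct the inputs."""
    f = np.maximum(x @ W_enc + b_enc, 0.0)  # ReLU: many features end up exactly zero
    x_hat = f @ W_dec + b_dec               # reconstruction from the active features
    return f, x_hat

def sae_loss(x, f, x_hat, sparsity_coeff):
    """Reconstruction error plus a weighted sparsity penalty (L1 here, an assumption)."""
    recon = np.mean(np.sum((x - x_hat) ** 2, axis=-1))
    sparsity = np.mean(np.sum(np.abs(f), axis=-1))
    return recon + sparsity_coeff * sparsity, recon, sparsity

# Hypothetical sizes and random data, for illustration only.
rng = np.random.default_rng(0)
d_model, d_features, batch = 8, 32, 4
x = rng.normal(size=(batch, d_model))
W_enc = rng.normal(scale=0.1, size=(d_model, d_features))
b_enc = np.zeros(d_features)
W_dec = rng.normal(scale=0.1, size=(d_features, d_model))
b_dec = np.zeros(d_model)

f, x_hat = sae_forward(x, W_enc, b_enc, W_dec, b_dec)
total, recon, sparsity = sae_loss(x, f, x_hat, sparsity_coeff=1e-3)
print(f"reconstruction={recon:.3f} sparsity={sparsity:.3f} total={total:.3f}")
```

Raising `sparsity_coeff` pushes training toward fewer active features at the cost of a worse reconstruction, and lowering it does the opposite.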

In the context of LLMs, SAEs receive the activations of the model's layers as input (attention outputs, dense layer outputs, residual streams) and try to learn sparse representations of them.

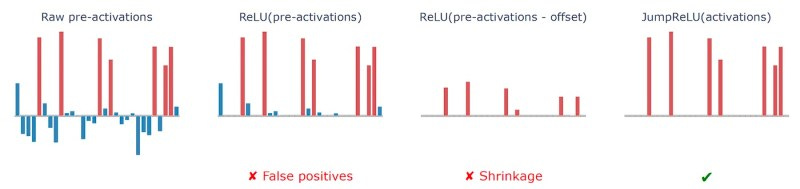

DeepMind’s JumpReLU SAE is designed to learn better sparse representations of the activations of LLM layers. Instead of applying a single fixed threshold to every feature, as ReLU does, JumpReLU uses a learned vector that assigns a threshold value to each individual feature. This enables JumpReLU SAE to find a better tradeoff between sparsity and reconstruction fidelity.
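The difference between a shared zero threshold and per-feature thresholds can be sketched as follows. This is a forward-pass illustration only: the function names and the example values of `z` and `theta` are assumptions, and in a real JumpReLU SAE the threshold vector is learned during training, which is not shown here.

```python
import numpy as np

def relu(z):
    """Standard ReLU: every feature shares the same threshold of zero."""
    return np.maximum(z, 0.0)

def jump_relu(z, theta):
    """Pass a pre-activation through unchanged only if it exceeds its own threshold."""
    return np.where(z > theta, z, 0.0)

# Hypothetical pre-activations for four features, and one threshold per feature.
z = np.array([[0.2, 0.2, 1.5, -0.3]])
theta = np.array([0.1, 0.5, 1.0, 0.1])

print(relu(z))              # features 1 and 2 both stay active at 0.2
print(jump_relu(z, theta))  # feature 2 is silenced because 0.2 is below its threshold of 0.5
```

Values that clear their threshold are passed through at full magnitude rather than shrunk, so a feature is either off or contributes its full value to the reconstruction.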

Experiments show that JumpReLU matches or outperforms other state-of-the-art SAE techniques. It can be an important tool in understanding and controlling the output and behavior of LLMs, especially as they grow bigger and more capable.

SAEs can be very important in future applications of LLMs. For example, they can help develop techniques that prevent LLMs from generating harmful content, such as malicious code, even when the models are jailbroken through carefully designed prompts. SAEs can also give more granular control over the model's responses, such as making them funnier or more technical.

Read more about JumpReLU SAE on VentureBeat

Read the JumpReLU paper on arXiv