UI-JEPA: Designed by Apple, inspired by Meta, built on Microsoft

UI-JEPA is an example of why knowledge sharing and open source work

Researchers at Apple recently introduced UI-JEPA, a model that can analyze user intent by processing sequences of user interface screenshots. At 4.4 billion parameters, UI-JEPA outperforms frontier models in understanding user intent, and it does so with a fraction of the model size and without the need to send data to the cloud.

I’m going to get into how it works in a bit, but let’s just take a moment to appreciate the great things that happen when we share knowledge (something that is, unfortunately, becoming rare in the current profit-driven state of the AI industry):

UI-JEPA is inspired by the Joint Embedding Predictive Architecture (JEPA), an architecture first proposed by Meta’s chief AI scientist, Yann LeCun. And in implementing it, the researchers used Phi-3, an open-source small language model developed by Microsoft. I hope we get to see more knowledge-sharing in the future.

With that out of the way, here is how UI-JEPA works:

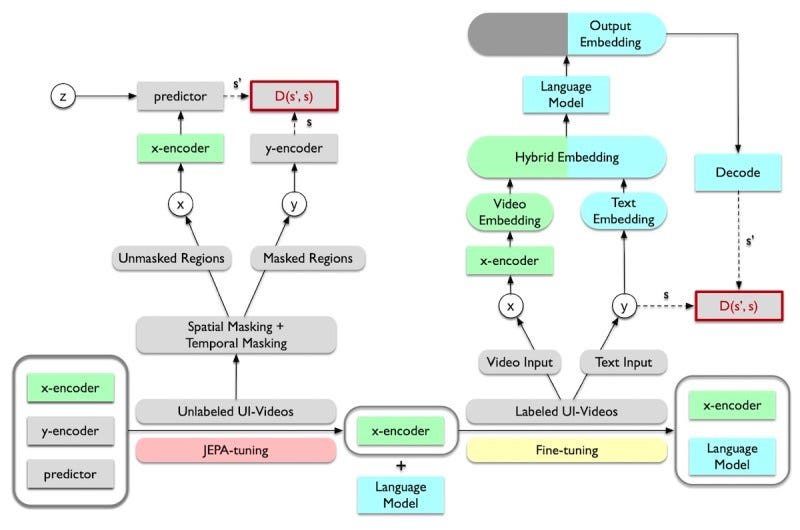

JEPA models try to predict missing pieces of the input data, whether they be masked parts of images or future video frames. JEPA uses self-supervised learning and does not need annotated data. However, instead of trying to make pixel-level predictions, JEPA models learn high-level semantic representations. This makes the models more efficient.
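To make the "predict representations, not pixels" idea concrete, here is a minimal toy sketch in plain Python. It is not the UI-JEPA code: the linear `encode` function, the mean-pooled context, and all weights and sizes are assumptions made up for illustration. The only point it shows is that the loss is measured between embeddings of the hidden frames, never between raw pixel values.

```python
import random

def encode(vector, weights):
    """Toy linear encoder: maps a vector of floats to an embedding."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]

def mean_vector(vectors):
    """Average a list of equal-length vectors element-wise."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def jepa_loss(frames, masked_idx, enc_w, pred_w):
    """Mean squared error between predicted and actual embeddings of masked frames."""
    visible = [f for i, f in enumerate(frames) if i not in masked_idx]
    # Context: a summary embedding of the frames the model is allowed to see.
    context = mean_vector([encode(f, enc_w) for f in visible])
    # The predictor guesses the embedding of the hidden frames from the context.
    predicted = encode(context, pred_w)
    loss = 0.0
    for i in masked_idx:
        # Simplification: the same encoder produces the targets. Published JEPA
        # variants such as I-JEPA use a separate, slowly updated target encoder.
        target = encode(frames[i], enc_w)
        loss += sum((p - t) ** 2 for p, t in zip(predicted, target))
    return loss / len(masked_idx)

# Hypothetical data: 6 "frames" of 4 values each, embedded into 3 dimensions.
random.seed(0)
frames = [[random.random() for _ in range(4)] for _ in range(6)]
enc_w = [[random.uniform(-1, 1) for _ in range(4)] for _ in range(3)]
pred_w = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]  # identity predictor
loss = jepa_loss(frames, {2, 4}, enc_w, pred_w)
```

In a real model, training would adjust the encoder and predictor weights to push this loss down. Because the targets are compact embeddings rather than full frames, the model does not spend capacity reconstructing every pixel.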

During training, a UI-JEPA encoder is trained on sequences of UI interactions. Once trained, the UI-JEPA encoder is combined with a Phi-3 language model, which is fine-tuned to generate textual descriptions from the embeddings.
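The post does not say exactly how the embeddings are handed to Phi-3, so the sketch below assumes one common pattern: project each encoder embedding to the language model's input width and place the results in front of the text prompt's token embeddings. The function names, weights, and sizes are all hypothetical.

```python
def project(embedding, proj_w):
    """Linear map from the encoder width to the language model's input width."""
    return [sum(w * x for w, x in zip(row, embedding)) for row in proj_w]

def build_decoder_input(video_embeddings, proj_w, prompt_token_embeddings):
    """Prefix the text prompt with projected video embeddings (assumed design)."""
    prefix = [project(e, proj_w) for e in video_embeddings]
    return prefix + prompt_token_embeddings

# Hypothetical sizes: encoder width 3, language-model width 2.
video_embeddings = [[1.0, 0.0, 2.0], [0.5, 0.5, 0.5]]
proj_w = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]
prompt_token_embeddings = [[0.1, 0.2]]

decoder_input = build_decoder_input(video_embeddings, proj_w, prompt_token_embeddings)
```

Under this assumption, fine-tuning would teach the language model to read the prefix as a description of what happened on screen and to write out the user's intent as text.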

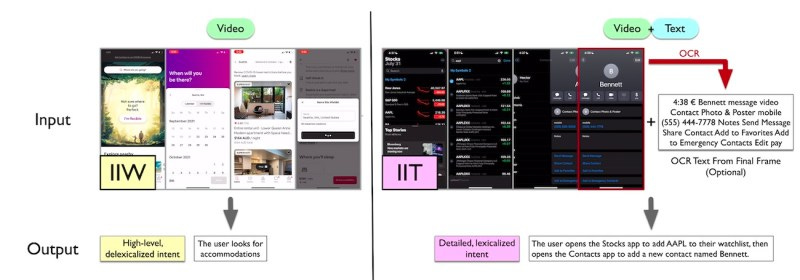

The researchers released Intent in the Wild (IIW) and Intent in the Tame (IIT), two new datasets and benchmarks to evaluate the capabilities of models in understanding user intent.

They compared UI-JEPA to other types of video encoders as well as frontier models such as GPT-4 and Claude-3 Sonnet. On both datasets, UI-JEPA outperformed all models when given few-shot prompts. On single-shot prompts, it was better than other open-source models but fell short of Claude-3 Sonnet.

There are several uses for UI-JEPA models, including creating automated feedback loops for AI agents to enable them to learn continuously from interactions without human intervention.

With its low memory and compute footprint, UI-JEPA could become an important part of Apple’s on-device AI strategy.

Another potential application is using UI-JEPA as a perception agent in agentic frameworks, capturing and storing user intent at various time points.

Read more about UI-JEPA, along with comments from the paper’s authors, on VentureBeat.

Read the paper on arXiv.
