Why would we need a DOOM-hallucinating model?

Google's GameNGen is an interesting experiment. But it won't replace real game engines anytime soon.

Google Research recently presented GameNGen, a diffusion model that can generate DOOM frames in real time in response to player actions. The model is fairly consistent in predicting the next frame while keeping track of objects, monsters, and player stats such as health, armor, and ammo.

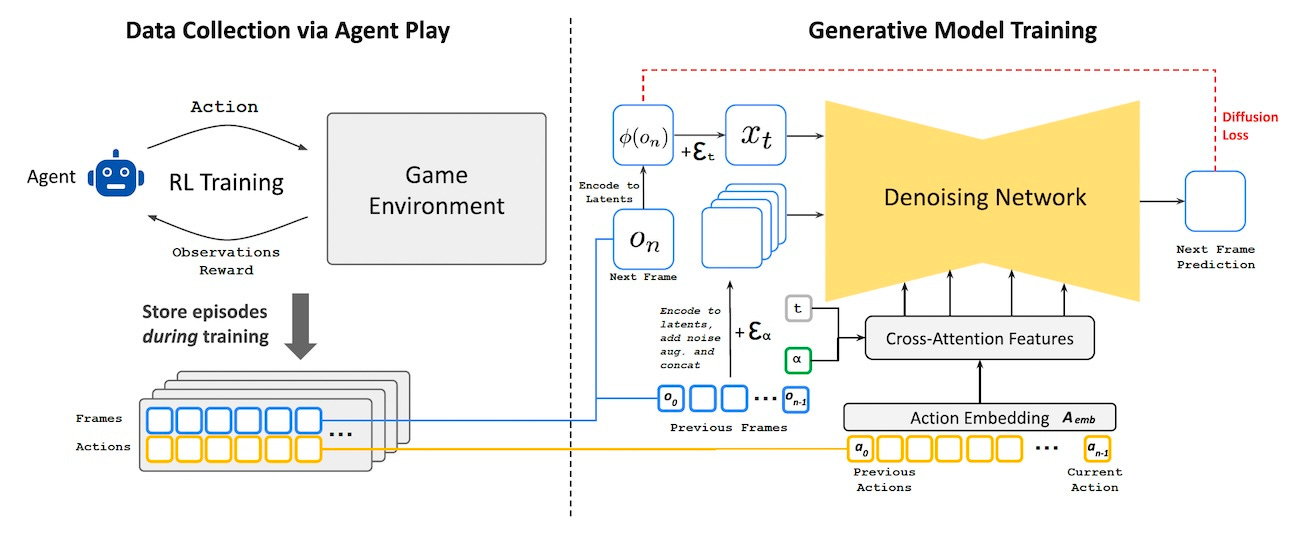

To create the model, the researchers first trained a reinforcement learning agent to play the game like a human. They then used the RL agent to generate millions of action-frame pairs to train the diffusion model. The base model they used is Stable Diffusion 1.4, though they modified it to be conditioned on the sequence of past frames and user actions to predict the next one.
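To make the conditioning setup concrete, here is a minimal sketch of how a recorded trajectory could be cut into training examples of the form (past frames, past actions) → next frame. This is not the researchers' code: the function name `make_training_samples`, the `Sample` type, and the `context_len` parameter are hypothetical, and frames are stand-in strings rather than images.

```python
from typing import List, NamedTuple, Sequence


class Sample(NamedTuple):
    past_frames: tuple    # the context_len frames before the target
    past_actions: tuple   # the action taken at each of those frames
    target_frame: object  # the frame the model should predict


def make_training_samples(
    frames: Sequence, actions: Sequence, context_len: int
) -> List[Sample]:
    """Slide a fixed-size window over one trajectory.

    Assumed convention (hypothetical): actions[i] is taken while frames[i]
    is on screen and leads to frames[i + 1], so there is one fewer action
    than there are frames.
    """
    if len(actions) != len(frames) - 1:
        raise ValueError("expected exactly one action between consecutive frames")
    samples = []
    for t in range(context_len, len(frames)):
        samples.append(
            Sample(
                past_frames=tuple(frames[t - context_len:t]),
                past_actions=tuple(actions[t - context_len:t]),
                target_frame=frames[t],
            )
        )
    return samples


# Toy trajectory: 6 frames, 5 actions, a context of 3 frames.
frames = ["f0", "f1", "f2", "f3", "f4", "f5"]
actions = ["a0", "a1", "a2", "a3", "a4"]
samples = make_training_samples(frames, actions, context_len=3)
```

Each sample only ever contains the last `context_len` steps, which is worth keeping in mind for the memory limitation discussed further down.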

GameNGen first learns a latent representation of the game elements and then learns to diffuse those into fully rendered frames. This latent representation is important because it basically compresses the game’s objects, stats, and everything else into a small vector space.

On a TPU V5 accelerator, the model can generate 20 frames per second with impressive consistency. Human evaluators who viewed 1.6- to 3-second clips could tell the GameNGen-generated ones from real gameplay only slightly better than random chance.
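For a sense of scale, the figures above translate into a per-frame time budget and a number of frames per evaluation clip. The snippet below is just that arithmetic; the helper names are my own.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame at the given frame rate."""
    return 1000.0 / fps


def frames_in_clip(fps: float, seconds: float) -> int:
    """Number of frames shown in a clip of the given length."""
    return round(fps * seconds)


budget = frame_budget_ms(20)       # 50.0 ms per generated frame
short_clip = frames_in_clip(20, 1.6)  # 32 frames
long_clip = frames_in_clip(20, 3)     # 60 frames
```

In other words, the whole denoising pass has to fit in about 50 milliseconds, and evaluators judged clips that were only a few dozen frames long.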

Following the release of the GameNGen paper, I’ve seen a fair amount of discussion on social media about this being a new paradigm that might replace traditional game engines. (And to be fair, the researchers were not very clear throughout the paper as to what kind of applications this kind of model could serve, though they mention a few at the end that are mostly not related to creating a full neural-based game engine.)

As someone who has done a good bit of work in both game development and machine learning, I beg to differ for a few reasons:

1- DOOM is a very simple game: DOOM is one of my favorite games, and I spent countless hours playing it on my 486 DX4 back in the 90s. But it is a very simple game. It uses billboards instead of 3D objects composed of hundreds of thousands of polygons, its graphics are very crude compared to what is available today, and it uses a small set of textures across the game. A large enough neural network would be able to learn a latent representation of all the game's elements and their interactions.

2- Not everything happens on camera: Again, DOOM is a simple game where most things only happen when the player is actively present. But in most games, a lot happens off-camera while the player is not looking. These kinds of dynamics require a real game engine that keeps track of objects that have never been seen by the player or have been off-camera for a while. Put another way, most games are not perfect information environments. In the paper, the researchers admit that the model only remembers a few seconds of game information and that scaling the model did not solve the problem. They conclude that getting more consistent results might require architectural changes (maybe combining it with a real game engine?).

3- Generative models are unpredictable: While randomness can make a game more fun and playable, players expect some kind of consistency and predictability from the environment. GameNGen has been trained on a distribution of player behaviors. Deviating from that behavior can cause wild fluctuations in the model’s behavior. Imagine your health dropping to zero because you approach a wall from an angle that is very different from what the model has seen during training.

4- Generative models are very inefficient: It is impressive to see a generative model predict a game in real time. But DOOM runs on all kinds of resource-constrained devices, including toothbrushes and flowerpots. GameNGen needs a TPU V5 chip to run, which costs several dollars per hour, and was trained on 128 TPU V5e chips, which cost hundreds of dollars per hour.
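The memory problem in point 2 can be illustrated with a toy comparison between a model that only sees a sliding window of recent frames and an engine that keeps explicit state. This is a deliberately simplified sketch, not how GameNGen represents anything internally: the class names are hypothetical, "frames" are just sets of visible object names, and the 60-frame window is an assumption (roughly 3 seconds at 20 frames per second).

```python
from collections import deque


class WindowedMemory:
    """Remembers only what appeared in the last `context_len` frames."""

    def __init__(self, context_len: int):
        # A bounded deque silently drops the oldest frame when full.
        self.context = deque(maxlen=context_len)

    def observe(self, visible_objects):
        self.context.append(frozenset(visible_objects))

    def remembers(self, obj) -> bool:
        return any(obj in frame for frame in self.context)


class EngineState:
    """Keeps every object it has ever registered, on camera or not."""

    def __init__(self):
        self.objects = set()

    def observe(self, visible_objects):
        self.objects |= set(visible_objects)

    def remembers(self, obj) -> bool:
        return obj in self.objects


CONTEXT_LEN = 60  # assumed: about 3 seconds at 20 fps

model = WindowedMemory(CONTEXT_LEN)
engine = EngineState()

# The player sees a barrel once, then looks away for 60 frames.
for visible in [{"barrel"}] + [set() for _ in range(CONTEXT_LEN)]:
    model.observe(visible)
    engine.observe(visible)

model_remembers = model.remembers("barrel")    # False: pushed out of the window
engine_remembers = engine.remembers("barrel")  # True: explicit state persists
```

Once the barrel frame falls out of the window, nothing in the model's input says it ever existed, while the engine still knows exactly where it is.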

All this said, I do not want to downplay the impressive results of the experiment. It shows how far you can push current models to learn new things. GameNGen demonstrates the potential of neural networks to learn and simulate complex environments in real time. As we have often seen, the technologies we create end up solving problems that are different from the ones they were intended for.

Read more about how GameNGen works on TechTalks.

See GameNGen’s website, which includes a link to the full paper.