A generative model that creates consistent images of the same object

When we humans see an object, we can usually imagine with decent precision what the object will look like from other angles. But diffusion models, the deep learning architecture used in DALL-E and Stable Diffusion, continue to face serious challenges in maintaining feature consistency across different views.

SyncDreamer, a new technique developed by various academic institutions, makes progress on this problem by using a special type of diffusion model that generates multiple views of an object at the same time and learns to synchronize the feature distribution of different view angles.

Key findings:

Diffusion models learn to generate images through noising and denoising

Diffusion models can create highly detailed 2D images but can’t remain consistent across different views

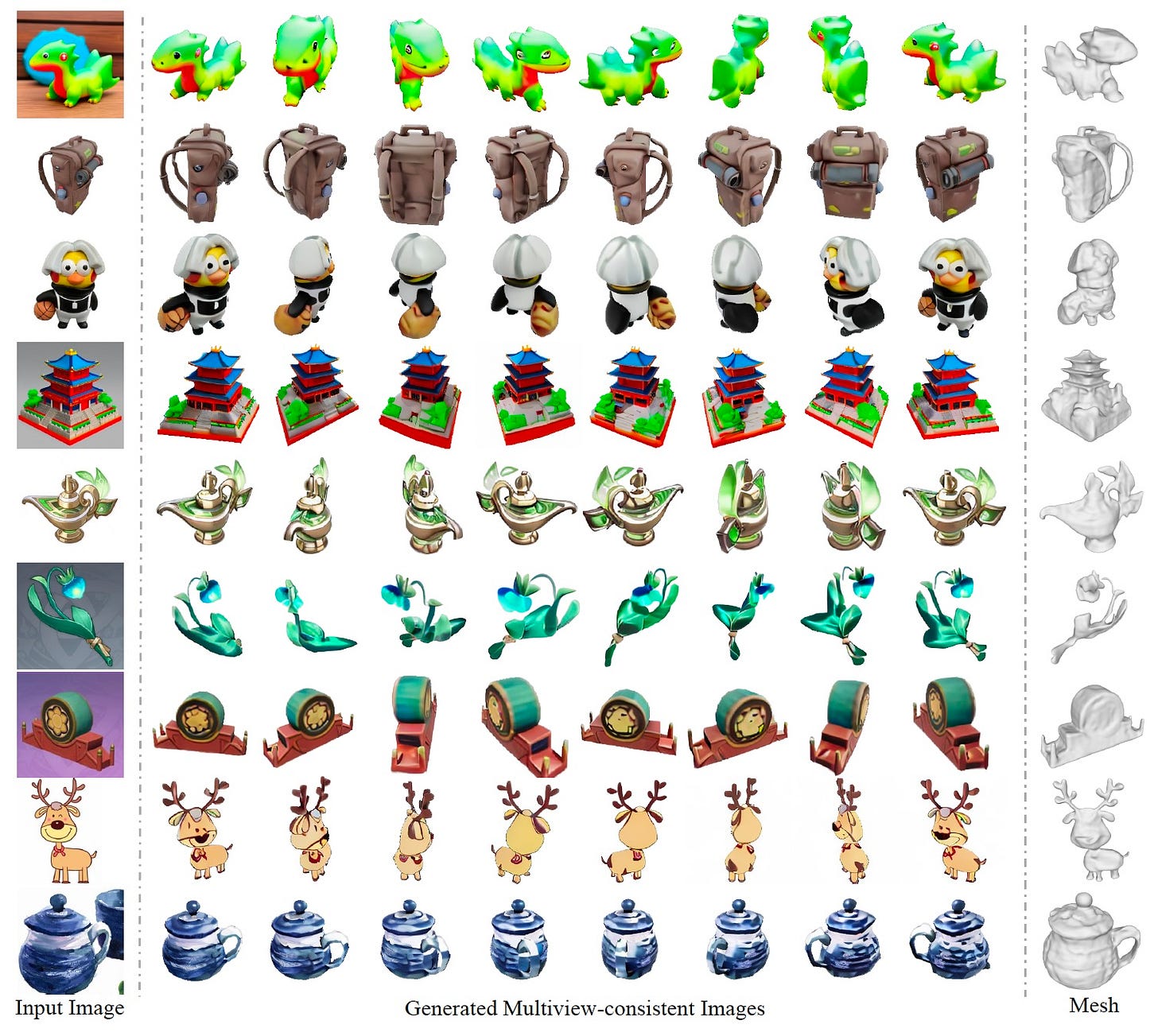

SyncDreamer is a model that takes a single 2D image and outputs multiple images of the same object from different angles

The key to SyncDreamer’s design is training a diffusion model that learns the feature distributions of different angles simultaneously

SyncDreamer uses 3D convnets, UNets, and diffusion to embed features in 3D space and ensure the generated images remain consistent across different angles

The output of SyncDreamer can be given to a 3D generative model such as NeRF or NeuS to create highly detailed 3D objects

As input, SyncDreamer can also take an image created by a text-to-image model such as input

This pipeline can help game developers quickly iterate on different design ideas and create 3D asset prototypes that can then be refined by an artist

Read the full article on TechTalks.

For more on generative AI:

Recommendations:

An excellent platform for working with ChatGPT, GPT-4, and Claude is ForeFront.ai, which has a super-flexible pricing plan and plenty of good features for customizing models and creating workflows.

Transformers for Natural Language Processing is an excellent introduction to the technology underlying LLMs.