Adversarial training is not safe for mobile robots

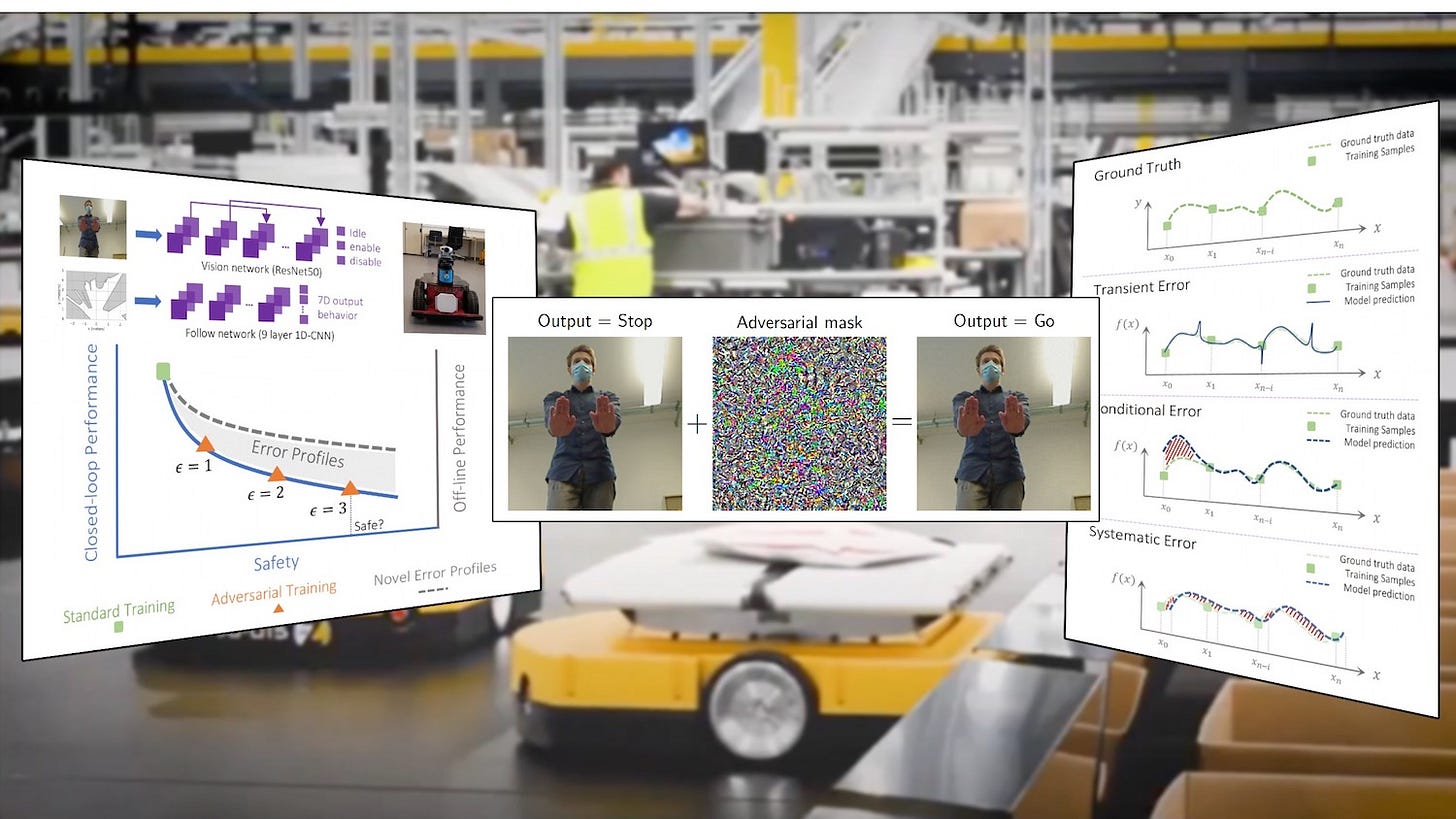

Adversarial attacks have become a common concern in deep learning. To counter them, machine learning engineers often train their deep neural networks on adversarial examples, inputs altered with small, deliberately crafted perturbations, so that the models become less sensitive to such manipulation.
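To make the idea concrete, here is a minimal sketch of training on adversarial examples. It is not the method from the paper discussed here: it assumes a tiny logistic-regression model instead of a deep network, uses the fast gradient sign method (FGSM) to craft perturbations, and all names (`fgsm`, `train`, `eps`) and the toy data are hypothetical choices for illustration.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict(w, b, x):
    # Probability that input x belongs to class 1.
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def loss(w, b, x, y):
    # Binary cross-entropy for a single example.
    p = predict(w, b, x)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, eps):
    # For this model, d(loss)/dx_i = (p - y) * w_i.
    # FGSM moves every feature by eps in the direction that raises the loss.
    err = predict(w, b, x) - y
    return [xi + eps * sign(err * wi) for xi, wi in zip(x, w)]

def train(data, eps=0.0, lr=0.1, epochs=200):
    # eps == 0 is ordinary training; eps > 0 is adversarial training:
    # each update uses an input perturbed against the current model.
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            x_in = fgsm(w, b, x, y, eps) if eps > 0 else x
            err = predict(w, b, x_in) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x_in)]
            b -= lr * err
    return w, b

# Hypothetical toy data: two well-separated clusters.
data = [([-1.0, -1.0], 0), ([-1.2, -0.8], 0), ([1.0, 1.0], 1), ([0.8, 1.2], 1)]
w_adv, b_adv = train(data, eps=0.2)
```

The only difference between the two training modes is the line that swaps the clean input for its perturbed version, which is why the technique is easy to bolt onto an existing training loop.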

But the same process, called “adversarial training,” can cause unwanted side effects when applied to robotics. According to research by scientists at the Institute of Science and Technology Austria, the Massachusetts Institute of Technology, and Technische Universität Wien, Austria, adversarial training reduces the safety of neural networks and creates new error profiles in robotics applications.

In a paper titled “Adversarial Training is Not Ready for Robot Learning,” the researchers explore the negative impact of adversarial training on autonomous robots and argue that the field needs new perspectives to address robustness and safety of neural networks.

In my latest column on TechTalks, I reviewed the paper and talked to the researchers about their work. Read the full article here.

For more on adversarial attacks:

Machine learning adversarial attacks are a ticking time bomb

Image-scaling attacks highlight dangers of adversarial machine learning

You can read our full coverage of adversarial machine learning here.