RAFT fine-tunes LLMs for better RAG performance

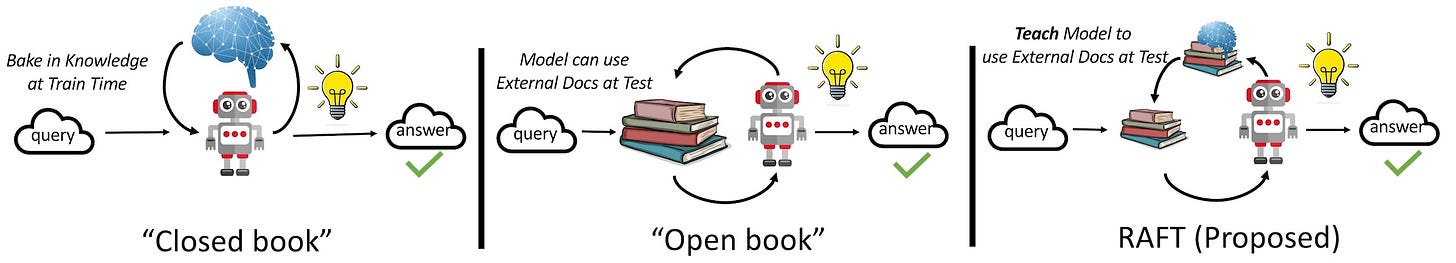

Retrieval Augmented Fine Tuning (RAFT) combines supervised fine-tuning with RAG to improve an LLM's domain knowledge and its ability to use in-context documents.

Researchers at the University of California, Berkeley, have released Retrieval Augmented Fine Tuning (RAFT), a new technique that optimizes LLMs for retrieval-augmented generation (RAG) on domain-specific knowledge.

RAFT uses simple but effective instruction and prompting techniques to fine-tune a language model so that it both gains knowledge of the specialized domain and gets better at extracting information from in-context documents.
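To make the idea concrete, here is a minimal sketch of how a single RAFT-style training example might be assembled. It is not the researchers' code. The function name, the `<DOCUMENT>` tags, the prompt layout, and the default `oracle_probability` are all hypothetical. The idea of mixing the relevant ("oracle") document with irrelevant "distractor" documents, and sometimes leaving the oracle out, reflects my reading of the RAFT paper rather than anything stated in this article, so treat it as an assumption.

```python
import random
from typing import Dict, List, Optional


def build_raft_example(
    question: str,
    oracle_doc: str,
    distractor_docs: List[str],
    answer_with_reasoning: str,
    oracle_probability: float = 0.8,  # hypothetical default, not from the article
    rng: Optional[random.Random] = None,
) -> Dict[str, str]:
    """Build one prompt/completion pair for supervised fine-tuning.

    Assumption: the context always contains the distractor documents and
    includes the oracle document only with probability `oracle_probability`,
    so the model learns both to read the context and to rely on what it
    has learned about the domain.
    """
    rng = rng or random.Random()

    context = list(distractor_docs)
    if rng.random() < oracle_probability:
        context.append(oracle_doc)
    # Shuffle so the position of the oracle document carries no signal.
    rng.shuffle(context)

    docs = "\n".join(f"<DOCUMENT>{doc}</DOCUMENT>" for doc in context)
    prompt = f"{docs}\n\nQuestion: {question}"

    # The completion is expected to be a reasoned answer that refers to the
    # relevant document, written by a human or a stronger model.
    return {"prompt": prompt, "completion": answer_with_reasoning}
```

The resulting dictionaries could then be fed to any standard supervised fine-tuning pipeline that accepts prompt/completion pairs.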

If RAG is “an open-book exam without studying” and fine-tuning is a “closed-book exam” where the model has memorized information or answers questions without referencing the documents, then a RAFT-trained model is like a student who has studied the topic and is taking an open-book exam. The student has ample knowledge of the topic and also knows how to get more precise information from the available documents.

Experiments show that RAFT models outperform all baseline methods in customizing LLMs for domain-specific applications. Particularly interesting are the prompting and reasoning techniques used to train models in a way that improves both domain knowledge and in-context learning.

Read all about RAFT on TechTalks.

For more on AI research: