TechTalks: The best stories of 2021

As we wrap up 2021, here’s a look at the most popular stories on TechTalks:

The future of deep learning, according to its pioneers

Deep neural networks will move past their shortcomings without help from symbolic artificial intelligence, three pioneers of deep learning argue in a paper published in the July issue of the Communications of the ACM journal.

In their paper, Yoshua Bengio, Geoffrey Hinton, and Yann LeCun, recipients of the 2018 Turing Award, explain the current challenges of deep learning and how it differs from learning in humans and animals. They also explore recent advances in the field that might provide blueprints for future research directions in deep learning.

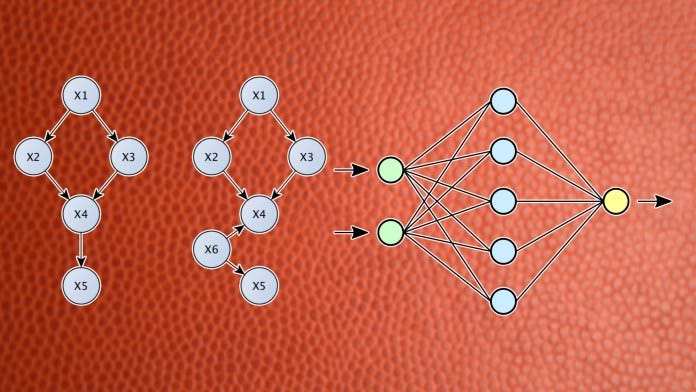

Why machine learning struggles with causality

In a paper titled “Towards Causal Representation Learning,” researchers at the Max Planck Institute for Intelligent Systems, the Montreal Institute for Learning Algorithms (Mila), and Google Research discuss the challenges arising from the lack of causal representations in machine learning models and provide directions for creating artificial intelligence systems that can learn causal representations.

This is one of several efforts that aim to explore and solve machine learning’s lack of causality, which can be key to overcoming some of the major challenges the field faces today.

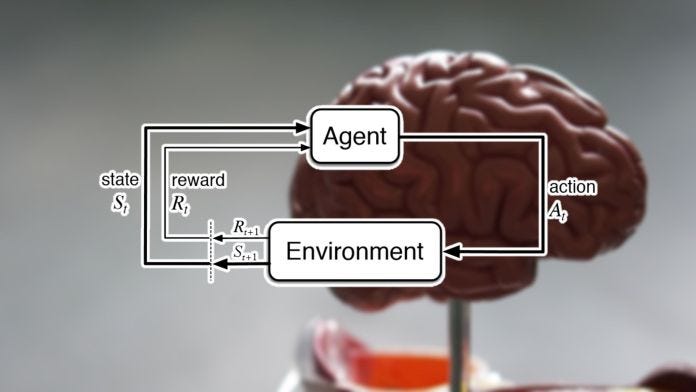

DeepMind scientists: Reinforcement learning is enough for general AI

In a new paper submitted to the peer-reviewed Artificial Intelligence journal, scientists at UK-based AI lab DeepMind argue that intelligence and its associated abilities will emerge not from formulating and solving complicated problems but from sticking to a simple but powerful principle: reward maximization.

Titled “Reward is Enough,” the paper, which is still in pre-proof as of this writing, draws inspiration from the evolution of natural intelligence as well as lessons from recent achievements in artificial intelligence. The authors suggest that reward maximization and trial-and-error experience are enough to develop behavior that exhibits the kind of abilities associated with intelligence. From this, they conclude that reinforcement learning, a branch of AI that is based on reward maximization, can lead to the development of artificial general intelligence.

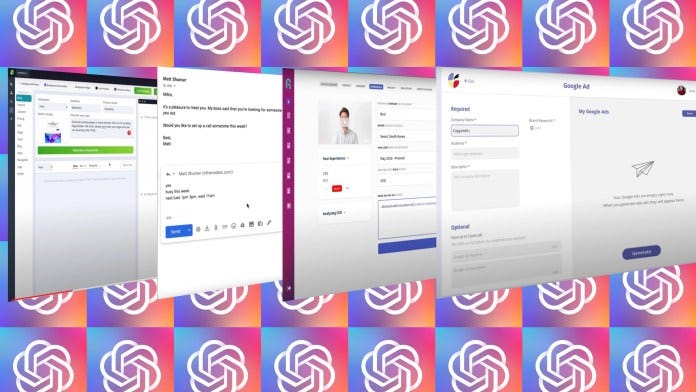

What does it take to create a GPT-3 product?

When OpenAI introduced GPT-3 last year, it was met with much enthusiasm. Shortly after GPT-3’s release, people started using the massive language model to automatically write emails and articles, summarize text, compose poetry, create website layouts, and generate code for deep learning in Python. There was an impression that all types of new businesses would emerge on top of GPT-3.

Eight months later, GPT-3 continues to be an impressive scientific experiment in artificial intelligence research. But it remains to be seen whether GPT-3 will be a platform to democratize the creation of AI-powered applications.

Tesla AI chief explains why self-driving cars don’t need lidar

Tesla has been a vocal champion of the pure vision-based approach to autonomous driving, and at this year’s Conference on Computer Vision and Pattern Recognition (CVPR), its chief AI scientist Andrej Karpathy explained why.

Speaking at the CVPR 2021 Workshop on Autonomous Driving, Karpathy, who has been leading Tesla’s self-driving efforts in recent years, detailed how the company is developing deep learning systems that only need video input to make sense of the car’s surroundings. He also explained why Tesla is in the best position to make vision-based self-driving cars a reality.