This small change will make transformers much more efficient

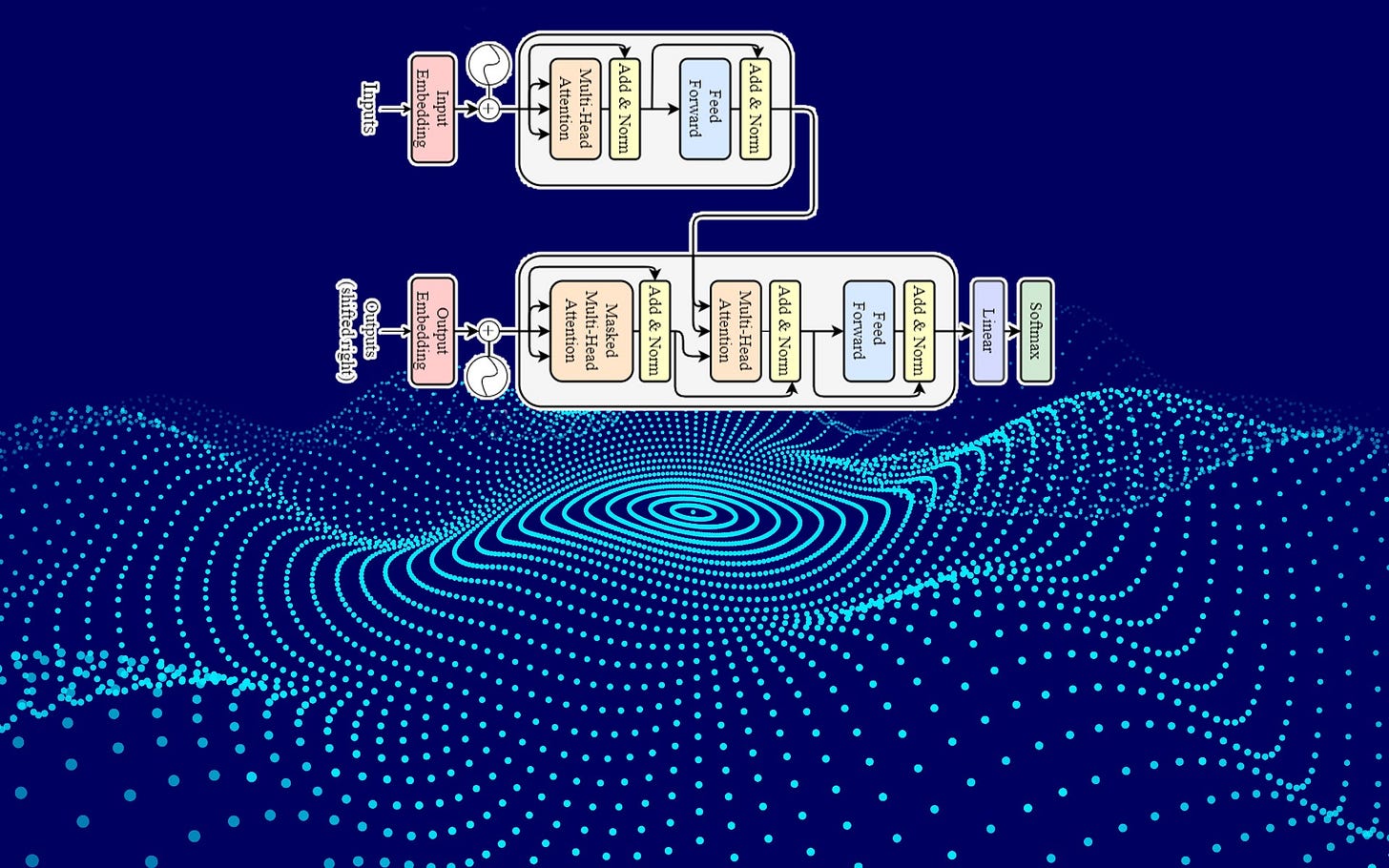

The transformer has been one of the most influential machine learning architectures in recent years. It underlies some of the most advanced deep learning systems, including large language models such as OpenAI's GPT-3 and protein structure prediction models such as DeepMind's AlphaFold.

The transformer architecture owes its success to its powerful attention mechanism, which enables it to outperform its predecessors, recurrent neural networks (RNNs) and long short-term memory (LSTM) networks. Transformer models can process long sequences of data in parallel and in both forward and reverse directions.
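To make the attention idea concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is an illustration under simplifying assumptions (a single head, no learned projection matrices, no masking), and the function names `softmax` and `attention` as well as the toy input are made up for this example.

```python
import math

def softmax(row):
    """Turn a list of scores into weights that sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    Each query is handled independently of the others, which is why
    real implementations compute all positions at once as one matrix
    product instead of looping.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this position to every position, earlier or later.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Toy sequence: three positions, each a 2-dimensional vector (hypothetical data).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, weights = attention(x, x, x)
```

Because no mask is applied, every position attends to both the positions before it and the positions after it, and no position has to wait for another to be processed first.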

Given the importance of transformer networks, several efforts are under way to improve their accuracy and efficiency. One of them is a new research project by scientists at the University of Cambridge, the University of Oxford, and Imperial College London, which suggests changing the transformer architecture from deep to wide. Although the architectural change is small, the results show that it significantly improves the speed, memory footprint, and interpretability of transformer networks.
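One way to picture "deep to wide" is as a reshuffling of attention heads: fewer layers stacked on top of each other, more heads side by side in each layer. The sketch below assumes, purely for illustration, that the total number of heads stays fixed. The `EncoderShape` class, the `widen` helper, and the starting point of 6 layers with 8 heads each are hypothetical and not taken from the research.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EncoderShape:
    """Hypothetical description of a transformer encoder's layout."""
    layers: int           # attention layers applied one after another
    heads_per_layer: int  # attention heads running side by side in a layer

    @property
    def total_heads(self) -> int:
        return self.layers * self.heads_per_layer

def widen(shape: EncoderShape) -> EncoderShape:
    """Collapse a deep layout into one layer with the same total head count."""
    return EncoderShape(layers=1, heads_per_layer=shape.total_heads)

deep = EncoderShape(layers=6, heads_per_layer=8)  # assumed starting point
wide = widen(deep)

print(deep, "total heads:", deep.total_heads)
print(wide, "total heads:", wide.total_heads)
```

In this picture the deep layout needs six attention steps in sequence, while the wide layout needs one. That gives some intuition for the speed claim, although the figures here are only an example.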

Read the full article on TechTalks.
