What happens when LLM in-context learning scales to thousands of shots

As the context windows of LLMs expand to millions of tokens, the models start to show new capabilities. In their latest study, researchers at Google DeepMind experimented with "many-shot in-context learning" (ICL).

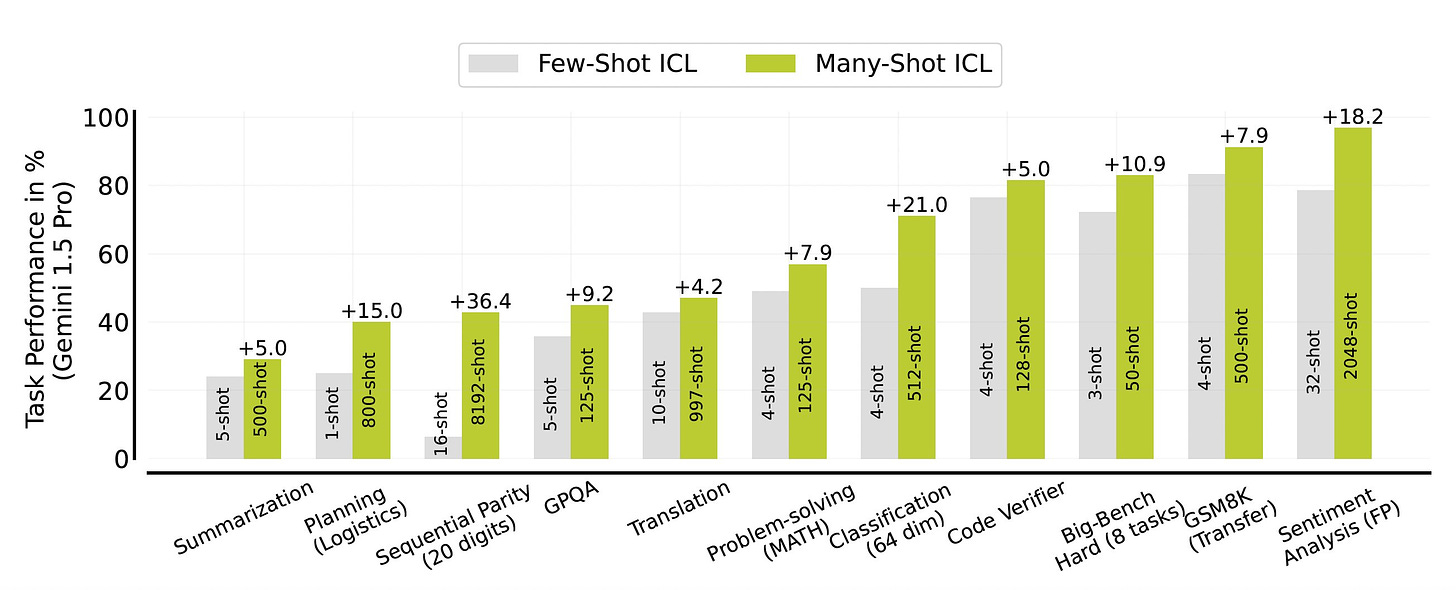

According to their findings, when you fit hundreds or even thousands of ICL examples into the prompt, the model's performance keeps improving, including on tasks that few-shot ICL can't solve and that usually require fine-tuning.
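To make the difference between few-shot and many-shot prompts concrete, here is a minimal Python sketch. The function name `build_many_shot_prompt`, the `Question:`/`Answer:` template, and the toy arithmetic data are all assumptions made for illustration; they are not taken from the paper.

```python
from typing import List, Tuple


def build_many_shot_prompt(
    examples: List[Tuple[str, str]], query: str, num_shots: int
) -> str:
    """Concatenate the first `num_shots` solved examples, then the unsolved query."""
    shots = examples[:num_shots]
    blocks = [f"Question: {q}\nAnswer: {a}" for q, a in shots]
    # The final block is left open so the model completes the answer.
    blocks.append(f"Question: {query}\nAnswer:")
    return "\n\n".join(blocks)


# Toy data: 1,000 arithmetic examples standing in for a real task dataset.
examples = [(f"What is {i} + {i}?", str(2 * i)) for i in range(1000)]

few_shot = build_many_shot_prompt(examples, "What is 7 + 5?", num_shots=4)
many_shot = build_many_shot_prompt(examples, "What is 7 + 5?", num_shots=500)
```

The only thing that changes between the two prompts is `num_shots`; the many-shot regime becomes practical once the context window is large enough to hold the longer prompt.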

They also introduce two techniques to reduce the need for human-generated data:

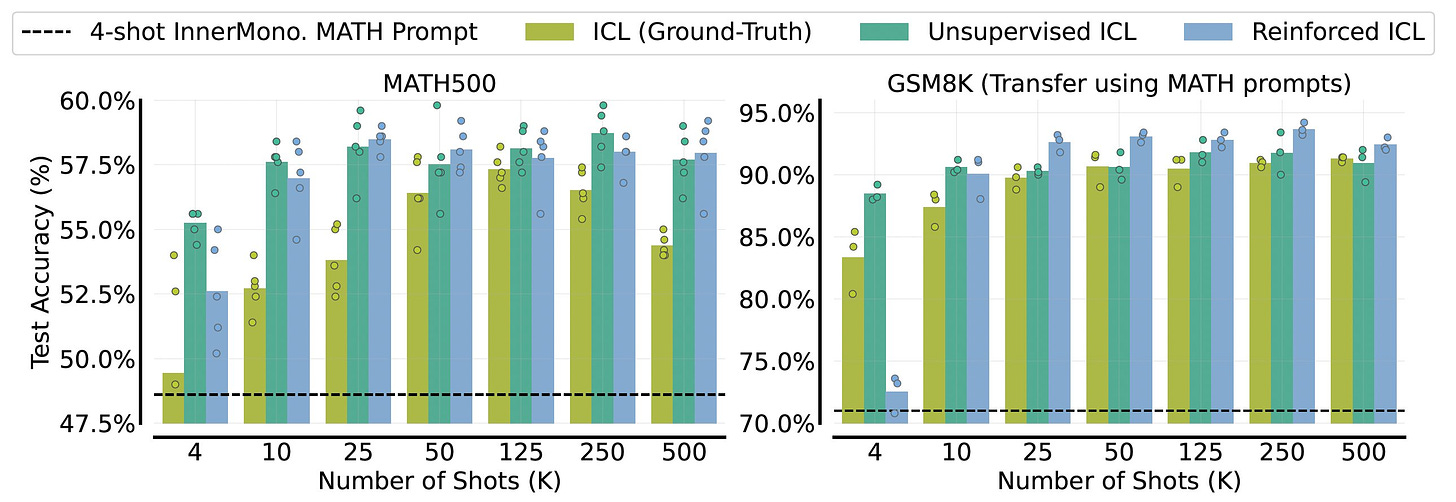

1- Reinforced ICL uses model-generated reasoning chains in place of human-written ones. This makes it easier to create ICL examples for problems where human-annotated data is scarce.

2- Unsupervised ICL fills the prompt with unsolved problems only, with no answers, to activate the model's existing knowledge of the topic. This is useful when the model already knows how to solve the problem but needs a stimulus to focus on the relevant concepts.
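The two techniques can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the authors' implementation: `generate` is a hypothetical stand-in for an LLM call, `fake_generate` is a toy replacement so the sketch runs without a model, the sketch assumes generated rationales are kept only when their final answer matches a known answer, and the prompt wording is made up.

```python
from typing import Callable, List, Tuple


def reinforced_icl_examples(
    problems: List[Tuple[str, str]],             # (problem, known final answer)
    generate: Callable[[str], Tuple[str, str]],  # hypothetical: problem -> (rationale, answer)
    samples_per_problem: int = 4,
) -> List[Tuple[str, str]]:
    """Collect model-written rationales whose final answer matches the known one."""
    kept = []
    for problem, gold in problems:
        for _ in range(samples_per_problem):
            rationale, answer = generate(problem)
            if answer.strip() == gold.strip():
                kept.append((problem, rationale))
                break  # one accepted rationale per problem is enough here
    return kept


def unsupervised_icl_prompt(problems: List[str], query: str) -> str:
    """Build a prompt that lists problems without any solutions, then the query."""
    header = "You will be provided with problems similar to the one below:"
    listed = "\n\n".join(f"Problem: {p}" for p in problems)
    return (
        f"{header}\n\n{listed}\n\n"
        f"Now solve the following problem.\nProblem: {query}\nSolution:"
    )


def fake_generate(problem: str) -> Tuple[str, str]:
    """Toy stand-in for an LLM: adds two numbers, but errs when the first is 13."""
    a, b = [int(t) for t in problem.replace("?", "").split() if t.isdigit()]
    answer = a + b if a != 13 else a + b + 1
    return f"{a} plus {b} is {answer}.", str(answer)


train = [("What is 2 + 3?", "5"), ("What is 13 + 1?", "14")]
reinforced = reinforced_icl_examples(train, fake_generate)
unsupervised = unsupervised_icl_prompt([p for p, _ in train], "What is 4 + 4?")
```

In this toy run, the rationale for the second problem is discarded because its final answer is wrong, so only the first problem ends up as an ICL example. The unsupervised prompt, by contrast, needs no answers at all.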

Another interesting finding is that many-shot ICL can override biases the model has learned.

Does many-shot ICL obviate the need for fine-tuned models? No, but it will be an important tool that makes it easier for developers to iterate on prototypes of LLM applications.

Read all about many-shot ICL on VentureBeat.

Read the paper on arXiv.