Why large language models fail at logical reasoning

Even before the recent craze about sentient chatbots, large language models (LLM) had been the source of much excitement and concern. In recent years, LLMs, deep learning models that have been trained on vast amounts of text, have shown remarkable performance on several benchmarks that are meant to measure language understanding.

Large language models such as GPT-3 and LaMDA manage to maintain coherence over long stretches of text. They seem to be knowledgeable about different topics. They can remain consistent in lengthy conversations. LLMs have become so convincing that some people associate them with personhood and higher forms of intelligence.

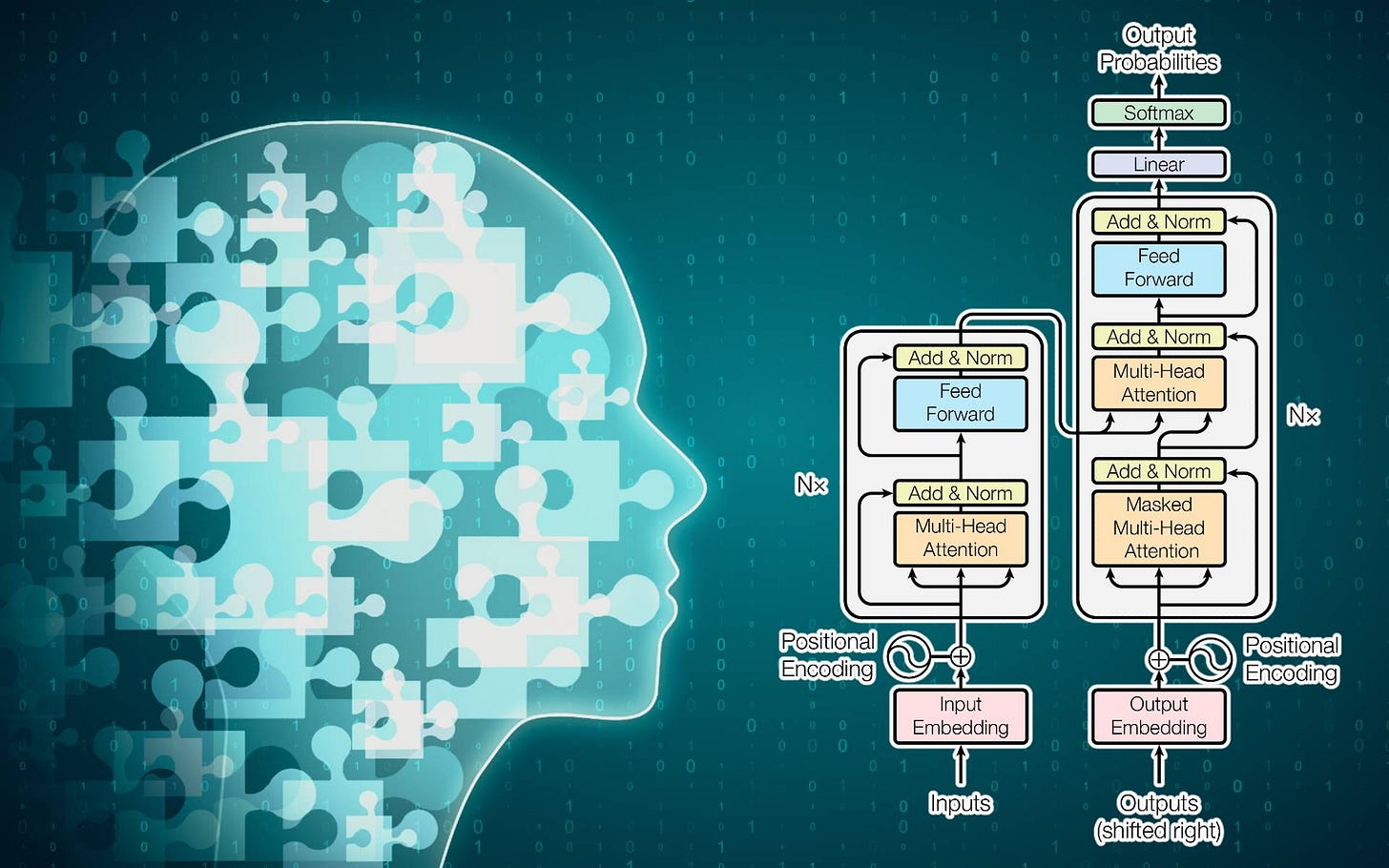

But can LLMs do logical reasoning like humans? According to a research paper by scientists at the University of California, Los Angeles, transformers, the deep learning architectures used in LLMs, don’t learn to emulate reasoning functions. Instead, they find clever ways to learn statistical features that inherently exist in the reasoning problems.

The researchers tested BERT, a popular transformer architecture, on a confined problem space. Their findings show that BERT can accurately respond to reasoning problems on in-distribution examples in the training space but can’t generalize to examples drawn from other distributions based on the same problem space.

Their work highlights some of the shortcomings of deep neural networks as well as the benchmarks used to evaluate them.

Read the full article on TechTalks.

For more on AI research: